It’s a Tuesday afternoon. Your team has been working on a critical feature for three weeks. The release window is tonight. Then a Jenkins plugin update breaks the pipeline. Two engineers drop everything to debug Groovy scripts and chase down plugin compatibility errors. By morning, the release has slipped – again.

If that sounds familiar, you’re not alone. This story plays out constantly across engineering teams worldwide, and the performance gap behind it is measurable. According to DORA’s 2021 Accelerate State of DevOps report, elite performers deploy code 973x more frequently and recover from incidents 6,570x faster than low performers. Continuous integration and deployment automation are among the capabilities DORA links to that performance.

And as pressure mounts to ship faster, the gap between what traditional CI/CD tools offer and what modern delivery demands has never been wider.

The shift from manual, maintenance-heavy pipelines to AI-powered CI/CD platforms isn’t just a technical upgrade; it’s a business decision. This post breaks down why Jenkins is holding teams back, what the hidden costs really look like, and how a modern agentic software delivery platform like BuildPiper changes the equation.

The Problem: Why Jenkins Is Slowing Teams Down

Jenkins has been a cornerstone of continuous integration and continuous deployment for over a decade. In its time, it was genuinely transformative. But the engineering world has moved on, and Jenkins, largely, hasn’t.

Here’s what engineering leaders are dealing with in practice:

1. Complex plugin management

Jenkins relies on a sprawling ecosystem of more than 1,800 plugins. Most capabilities beyond the core, from Docker support to Slack notifications, require a separate plugin, and plugins don’t always play nicely together. Compatibility issues between plugins are a recurring source of pipeline failures, and every update is a potential risk.

2. Constant scripting work

Writing and maintaining Jenkinsfiles is a specialist skill. Teams spend hours crafting Groovy-based pipelines, troubleshooting syntax errors and updating scripts every time infrastructure or requirements change. This is pure engineering overhead with zero customer value.

3. Security vulnerabilities

Jenkins’s plugin-heavy architecture creates a broad attack surface. The Jenkins project publishes security advisories regularly, many of them for plugins. Keeping dozens of plugins patched demands dedicated attention.

4. High maintenance overhead

Self-hosted Jenkins controllers and agents need to be provisioned, updated, monitored and scaled by your own team. That maintenance overhead alone can consume a meaningful portion of a DevOps team’s bandwidth. That time could go toward improving delivery rather than sustaining infrastructure.

5. The business impact is real

Delayed releases mean delayed revenue. Frequent Jenkins pipeline issues erode confidence in the delivery process. And relying on a small group of Jenkins experts creates a talent dependency risk that’s hard to manage as teams grow.

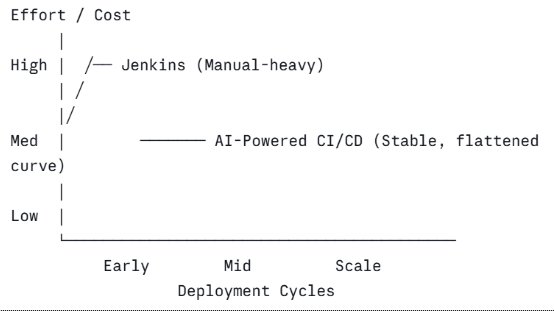

Visual Insight: Manual CI/CD vs. Automated CI/CD

What this shows: With Jenkins, cost and effort climb as teams grow and pipelines multiply, because every new service, environment or team member adds complexity. With an AI-powered approach, onboarding new pipelines is largely automated, so the cost curve flattens even as deployment frequency increases. In short, manual systems scale poorly, while automation reduces long-term cost and effort.

The Hidden Cost of Manual CI/CD

The real cost of Jenkins isn’t the tooling itself; it’s the cumulative drain on engineering time, talent and velocity.

Time spent managing pipelines. Teams running traditional pipelines often spend a disproportionate share of their week on maintenance tasks rather than shipping features. Those tasks include writing scripts, managing plugins and fixing broken builds.

Cost of failed deployments. Every failed deployment has a cost: engineering time to diagnose, potential downtime and delayed delivery. Manual configuration errors are a common cause of deployment failures, and they’re largely avoidable with proper automation.

The DevOps expertise gap. Jenkins requires experienced engineers to set up and maintain effectively, and that’s a bottleneck. If your Jenkins expert goes on leave, falls sick or moves to another company, your pipeline operations are at risk.

Comparison: Jenkins vs. AI-Powered CI/CD

| Factor | Jenkins (Traditional CI/CD) | AI-Powered CI/CD |
|---|---|---|
| Setup Time | High | Low |
| Maintenance | Continuous effort | Minimal |
| Error Rate | High (manual scripts) | Low |
| Deployment Speed | Slower | Faster |
| Cost Efficiency | Declines over time | Improves over time |

The goal is to reduce CI/CD operational costs and deployment time in a way that stays sustainable as your engineering org scales. CI and testing tools that require constant human attention work against that goal.

The Rise of AI-Powered CI/CD

The category of AI-powered CI/CD pipelines has matured significantly in recent years. The core idea is straightforward: remove as much manual work as possible from the delivery pipeline. Intelligent automation then handles the tasks humans traditionally had to do by hand.

In practical terms, this means:

- Auto pipeline creation – Instead of writing pipeline-as-code from scratch, the platform generates pipelines based on your project structure and configuration.

- Smart error detection – Instead of debugging cryptic build logs, the system surfaces the root cause and, in some cases, suggests or applies a fix automatically.

- Predictive deployment optimization – AI-assisted platforms can flag risky deployments before they happen, based on patterns from past releases.
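The Python sketch below makes “smart error detection” more concrete by showing the simplest form of the idea. It scans a build log for known failure signatures and surfaces the first match together with a probable cause.

This is an illustration under stated assumptions, not how BuildPiper or any specific platform works. AI-powered tools may go further, for example by learning signatures from past failures. The `SIGNATURES` table, the `Diagnosis` type and the `diagnose` function are hypothetical names chosen for this example.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class Diagnosis:
    line_number: int  # 1-based line in the log where a signature matched
    line: str         # the offending log line, whitespace-stripped
    cause: str        # human-readable probable root cause


# Hypothetical, hand-written failure signatures. A real platform would
# curate or learn far more of these.
SIGNATURES = [
    (re.compile(r"No space left on device"), "Build agent ran out of disk space"),
    (re.compile(r"Could not resolve host|Connection timed out"), "Network or registry unreachable"),
    (re.compile(r"ModuleNotFoundError: No module named '([^']+)'"), "Missing Python dependency: {0}"),
    (re.compile(r"OOMKilled|java\.lang\.OutOfMemoryError"), "Process exceeded its memory limit"),
]


def diagnose(log_text: str) -> Optional[Diagnosis]:
    """Return the earliest log line matching a known signature, or None."""
    for number, raw in enumerate(log_text.splitlines(), start=1):
        for pattern, template in SIGNATURES:
            match = pattern.search(raw)
            if match:
                # Captured groups (e.g. the module name) fill the template's placeholders.
                return Diagnosis(number, raw.strip(), template.format(*match.groups()))
    return None


if __name__ == "__main__":
    sample_log = """Step 3/7 : RUN pip install -r requirements.txt
Successfully installed flask
Traceback (most recent call last):
ModuleNotFoundError: No module named 'requests'
"""
    result = diagnose(sample_log)
    print(result.cause)  # Missing Python dependency: requests
```

The sketch returns the earliest match. This reflects a common heuristic: in a failed build, the first error is often the root cause, and later errors are fallout from it.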

The result is a fundamentally different operating model. Automated deployment tools at this level reduce human dependency without reducing engineering control. The best CI/CD platforms today are built around this principle: intelligently automate the routine, so engineers can focus on the exceptional.

How BuildPiper Solves These Challenges

BuildPiper was designed specifically for the challenges modern engineering teams face. It is not a patch on legacy tooling but a ground-up rethink of what an agentic software delivery platform should do.

Here’s where it changes the equation:

1. No manual scripting

BuildPiper eliminates the need to write and maintain pipeline scripts. Pipelines are configured through a guided interface, with automation handling the underlying logic. Engineers don’t need to be Groovy specialists to operate it.

2. Reduced plugin dependency

BuildPiper reduces plugin dependency by offering pre-built, ready-to-use integrations and capabilities natively within the platform, eliminating the need to install and manage dozens of separate Jenkins plugins.

Instead of handling constant plugin updates, compatibility issues and security patches, teams can use BuildPiper’s built-in features or customize workflows as needed. If a custom plugin is required, you write a script, package it into a Docker image and deploy it. That process is faster and far simpler than building and maintaining a Java-based Jenkins plugin.

3. Faster onboarding

New services and teams can be onboarded in hours rather than days. The platform is built to scale across microservices architectures without compounding complexity.

4. Built-in security and governance

Security controls are embedded in the platform itself rather than bolted on through plugins. That reduces the surface area for vulnerabilities and spares teams from chasing plugin patches.

5. Scalable pipelines that don’t require scaling the team

As deployment frequency grows, BuildPiper’s automation absorbs the additional load rather than passing it to engineers.

The comparison with Jenkins isn’t about one tool being “better” in the abstract; it’s about fitness for purpose. Jenkins was built for a different era of software delivery. BuildPiper is built for the one we’re in now.

Business Impact: What the Switch Actually Delivers

For decision-makers, the outcomes matter more than the features. Here’s what teams typically gain after moving away from manual CI/CD:

Faster time-to-market. With automated pipelines and fewer manual steps, release cycles shorten. Features reach users faster and the business can respond to the market more quickly.

Reduced DevOps workload. Engineers spend less time on pipeline maintenance and more time on work that drives value. This is one of the clearest ways to reduce CI/CD costs without reducing team capability.

Lower operational costs. Fewer incidents, less debugging time and reduced dependency on specialist knowledge all translate directly to cost savings.

Improved release reliability. Automated pipelines are consistent. They don’t have bad days, forget steps, or make typos in config files. Reliability improves, and with it, confidence in the delivery process.

When Should You Move Away from Jenkins?

Not every team is ready to make the switch immediately, and that’s okay. But there are clear signals that the cost of staying is exceeding the cost of moving:

- Too many plugins to manage – If your team spends meaningful time tracking plugin updates and compatibility, that’s overhead you’re paying continuously.

- Frequent pipeline failures – If broken pipelines are a recurring story in your standups, the tool is creating risk, not reducing it.

- Increasing DevOps costs – If your CI/CD operational spend keeps climbing without a corresponding lift in delivery speed, the model isn’t scaling.

- Slow release cycles – If competitors are shipping faster and your pipeline is part of the reason, that’s a competitive disadvantage worth addressing.

- Knowledge concentration risk – If only one or two people truly understand your Jenkins setup, that’s a fragility your business shouldn’t accept.

Conclusion

The era of manually maintained CI/CD pipelines is winding down. Not because Jenkins isn’t functional, but because the engineering world has moved to a pace and complexity where manual effort at the pipeline level is a liability, not a feature.

The shift to intelligent, AI-powered CI/CD is about removing the operational drag that slows teams down, reduces reliability, and increases costs over time. Platforms like BuildPiper represent what modern delivery infrastructure looks like: automated where it should be, scalable by design, and built to reduce the gap between code complete and customer value.

If your current CI/CD tool is creating more work than it’s eliminating, it may be time to rethink the foundation.

Frequently Asked Questions

1. What are the limitations of Jenkins?

A. Jenkins requires significant manual effort to set up and maintain. Its plugin-based architecture introduces compatibility risks, security vulnerabilities and ongoing maintenance overhead. As teams and services scale, Jenkins complexity grows faster than the team’s capacity to manage it, leading to slower releases and higher operational costs.

2. What is an AI-powered CI/CD pipeline?

A. An AI-powered CI/CD pipeline uses intelligent automation to reduce manual work in the software delivery process. This includes auto-generating pipeline configurations, detecting and diagnosing errors automatically, and optimizing deployment patterns based on historical data. The goal is to make delivery faster and more reliable without requiring constant human intervention.

3. How do you reduce CI/CD operational costs?

A. CI/CD operational costs are reduced by minimizing manual scripting, reducing pipeline failures, shortening onboarding time for new services, and decreasing dependency on specialized DevOps knowledge. Moving from a manual-heavy tool like Jenkins to an automated platform directly addresses each of these cost drivers.

4. What are the best CI/CD platforms for scaling engineering teams?

A. The best CI/CD platforms for scaling teams are those that reduce complexity as deployment frequency increases rather than adding to it. Platforms with built-in automation, native integrations and minimal plugin dependency are better suited for modern microservices environments than legacy tools designed for simpler, single-application workflows.
